Front end - My crawler sometimes gets empty tag content and sometimes gets it correctly. How can I fix this?

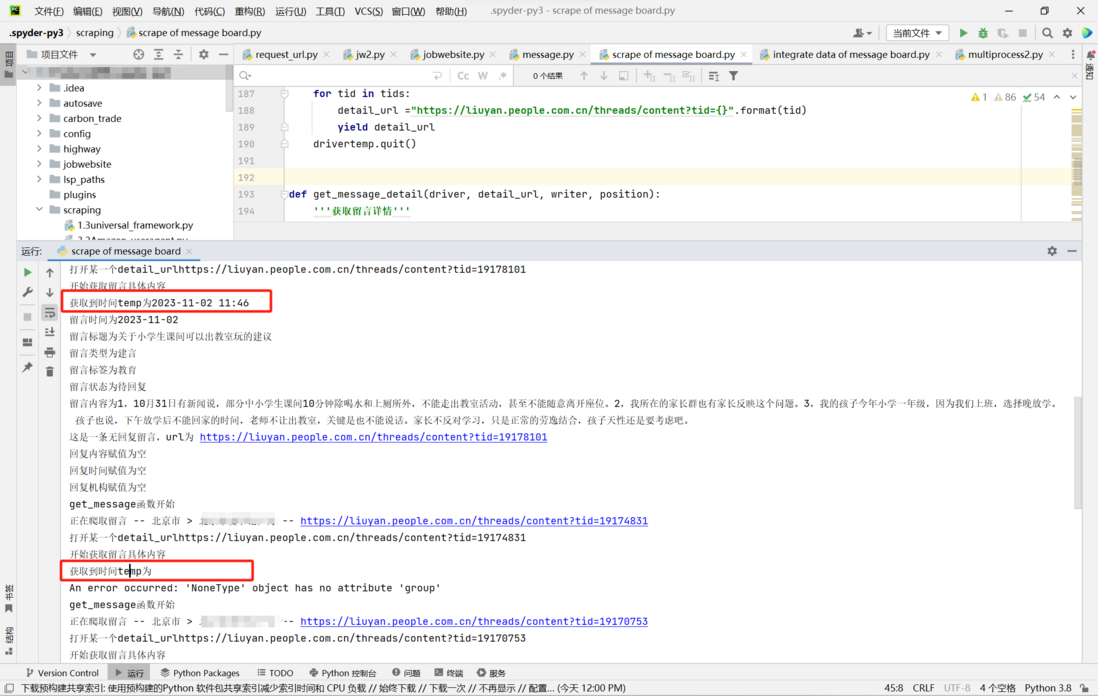

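I am crawling data from the People's Daily Online Leaders' Message Board (人民网领导留言板). On the message detail page I extract the message time with an XPath. For some messages the time comes back correctly, and for others it comes back empty, seemingly at random, and I don't understand why. When the extracted time is empty, retrying a dozen times (sometimes thirty-odd times) eventually returns the value. Why does this happen, and how should I fix it?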

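I would also appreciate any suggestions for speeding up the crawl, or for saving results incrementally so that an interrupted crawl can resume where it left off instead of starting over.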

Screenshot of the problem:

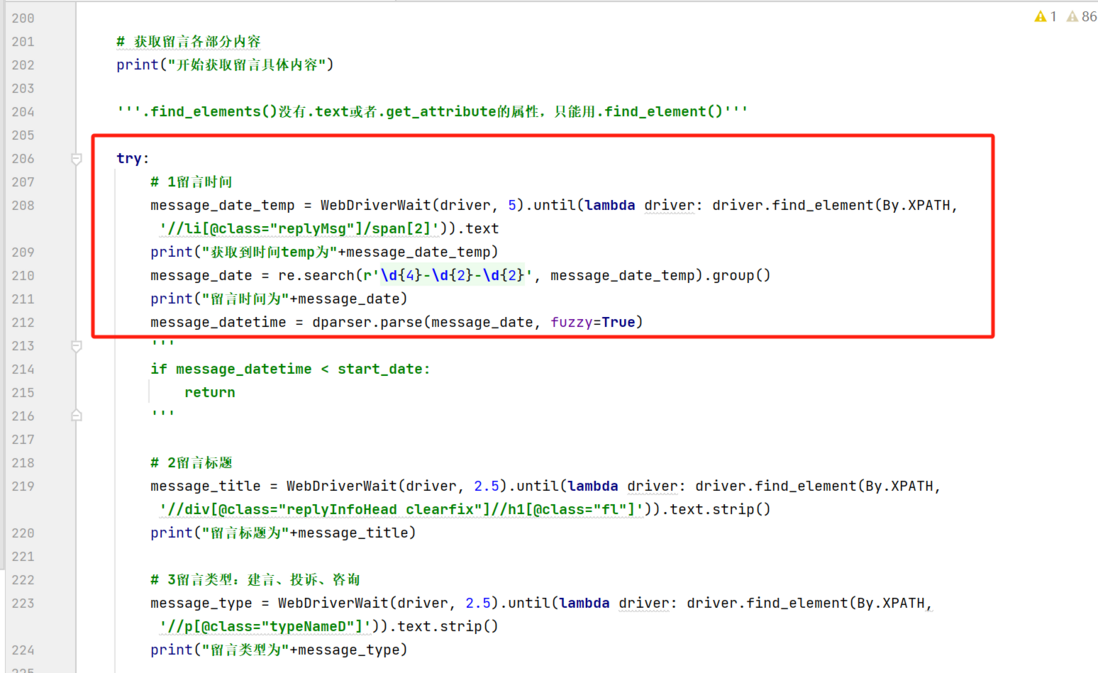

Screenshot of the code:

Page URL:

https://liuyan.people.com.cn/threads/list?fid=539

The code:

```python
#!/usr/bin/env python3
# -*- coding: utf-8 -*-
# Multiprocessing version
import csv
import os
import random
import re
import time
import traceback
import dateutil.parser as dparser
from random import choice
from multiprocessing import Pool
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.chrome.options import Options
from selenium.common.exceptions import TimeoutException

# Cut-off date
start_date = dparser.parse('2023-11-01')

# Browser options
chrome_options = Options()
chrome_options.add_argument('blink-settings=imagesEnabled=false')


def get_time():
    '''Return a random delay in seconds'''
    return round(random.uniform(3, 6), 1)


def get_user_agent():
    '''Return a random user agent'''
    user_agents = [
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",
        "Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
        "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
        "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
        "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
        "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
        "Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",
        "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/2.0 Safari/536.11",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.71 Safari/537.1 LBBROWSER",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; LBBROWSER)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E; LBBROWSER)",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.84 Safari/535.11 LBBROWSER",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; QQBrowser/7.0.3698.400)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SV1; QQDownload 732; .NET4.0C; .NET4.0E; 360SE)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",
        "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",
        "Mozilla/5.0 (iPad; U; CPU OS 4_2_1 like Mac OS X; zh-cn) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8C148 Safari/6533.18.5",
        "Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:2.0b13pre) Gecko/20110307 Firefox/4.0b13pre",
        "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.11 (KHTML, like Gecko) Chrome/23.0.1271.64 Safari/537.11",
        "Mozilla/5.0 (X11; U; Linux x86_64; zh-CN; rv:1.9.2.10) Gecko/20100922 Ubuntu/10.10 (maverick) Firefox/3.6.10",
        "MQQBrowser/26 Mozilla/5.0 (Linux; U; Android 2.3.7; zh-cn; MB200 Build/GRJ22; CyanogenMod-7) AppleWebKit/533.1 (KHTML, like Gecko) Version/4.0 Mobile Safari/533.1",
        "Mozilla/5.0 (iPhone; CPU iPhone OS 9_1 like Mac OS X) AppleWebKit/601.1.46 (KHTML, like Gecko) Version/9.0 Mobile/13B143 Safari/601.1",
        "Mozilla/5.0 (Linux; Android 5.1.1; Nexus 6 Build/LYZ28E) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/48.0.2564.23 Mobile Safari/537.36",
        "Mozilla/5.0 (iPod; U; CPU iPhone OS 2_1 like Mac OS X; ja-jp) AppleWebKit/525.18.1 (KHTML, like Gecko) Version/3.1.1 Mobile/5F137 Safari/525.20",
        "Mozilla/5.0 (Linux;u;Android 4.2.2;zh-cn;) AppleWebKit/534.46 (KHTML,like Gecko) Version/5.1 Mobile Safari/10600.6.3 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)",
        "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"
    ]
    # Pick a random entry from the list to use as the simulated browser
    user_agent = choice(user_agents)
    return user_agent


def get_fid():
    '''Read all officials' ids'''
    with open('省级领导.txt', 'r') as f:
        content = f.read()
        fids = content.split()
    return fids


def get_detail_urls(position, list_url):
    '''Get all message links for one official'''
    print("get_detail_urls started")
    user_agent = get_user_agent()
    chrome_options.add_argument('user-agent=%s' % user_agent)
    drivertemp = webdriver.Chrome(options=chrome_options)
    drivertemp.maximize_window()
    drivertemp.get(list_url)
    print("drivertemp opened the page in get_detail_urls")
    time.sleep(2)
    tids = []
    # Suggestions tab: keep loading more pages
    while True:
        try:
            next_page_button = WebDriverWait(drivertemp, 15, 2).until(EC.element_to_be_clickable((By.CLASS_NAME, "mordList")))
            datestr = WebDriverWait(drivertemp, 10).until(lambda driver: driver.find_element(By.XPATH, '//*[@class="replyList"]/li[last()]/div[2]/div[1]/p')).text.strip()
            datestr = re.search(r'\d{4}-\d{2}-\d{2}', datestr).group()
            date = dparser.parse(datestr, fuzzy=True)
            print('crawling detail urls --', position, '--', date)
            # Simulate a click on "load more"
            if date > start_date:
                next_page_button.click()
            else:
                break
        except TimeoutException:
            # The network sometimes times out; in that case, visit the page again
            drivertemp.quit()
            get_detail_urls(position, list_url)
        time.sleep(get_time())
    message_elements_label1 = drivertemp.find_elements(By.XPATH, '//div[@class="headMainS fl"]//span[@class="t-mr1 t-ml1"]')
    for element in message_elements_label1:
        tid = element.text.strip().split(':')[-1]
        tids.append(tid)
    # Complaints / requests for help tab: keep loading more pages
    WebDriverWait(drivertemp, 50, 2).until(EC.element_to_be_clickable((By.ID, "tab-second"))).click()
    while True:
        try:
            next_page_button = WebDriverWait(drivertemp, 50, 2).until(EC.element_to_be_clickable((By.CLASS_NAME, "mordList")))
            datestr = WebDriverWait(drivertemp, 10).until(lambda driver: driver.find_element(By.XPATH, '//*[@class="replyList"]/li[last()]/div[2]/div[1]/p')).text.strip()
            datestr = re.search(r'\d{4}-\d{2}-\d{2}', datestr).group()
            date = dparser.parse(datestr, fuzzy=True)
            print('crawling detail urls --', position, '--', date)
            # Simulate a click on "load more"
            if date > start_date:
                next_page_button.click()
            else:
                break
        except TimeoutException:
            # The network sometimes times out; in that case, visit the page again
            drivertemp.quit()
            get_detail_urls(position, list_url)
        time.sleep(get_time())
    message_elements_label2 = drivertemp.find_elements(By.XPATH, '//div[@class="headMainS fl"]//span[@class="t-mr1 t-ml1"]')
    for element in message_elements_label2:
        tid = element.text.strip().split(':')[-1]
        tids.append(tid)
    # Inquiries tab: keep loading more pages
    WebDriverWait(drivertemp, 50, 2).until(EC.element_to_be_clickable((By.ID, "tab-third"))).click()
    while True:
        try:
            next_page_button = WebDriverWait(drivertemp, 50, 2).until(EC.element_to_be_clickable((By.CLASS_NAME, "mordList")))
            datestr = WebDriverWait(drivertemp, 10).until(lambda driver: driver.find_element(By.XPATH, '//*[@class="replyList"]/li[last()]/div[2]/div[1]/p')).text.strip()
            datestr = re.search(r'\d{4}-\d{2}-\d{2}', datestr).group()
            date = dparser.parse(datestr, fuzzy=True)
            print('crawling detail urls --', position, '--', date)
            # Simulate a click on "load more"
            if date > start_date:
                next_page_button.click()
            else:
                break
        except TimeoutException:
            # The network sometimes times out; in that case, visit the page again
            drivertemp.quit()
            get_detail_urls(position, list_url)
        time.sleep(get_time())
    message_elements_label3 = drivertemp.find_elements(By.XPATH, '//div[@class="headMainS fl"]//span[@class="t-mr1 t-ml1"]')
    for element in message_elements_label3:
        tid = element.text.strip().split(':')[-1]
        tids.append(tid)
    # Build all detail links
    print(position + " tid list: " + str(tids))
    for tid in tids:
        detail_url = "https://liuyan.people.com.cn/threads/content?tid={}".format(tid)
        yield detail_url
    drivertemp.quit()


def get_message_detail(driver, detail_url, writer, position):
    '''Get the details of one message'''
    print("get_message_detail started")
    print('crawling message --', position, '--', detail_url)
    driver.get(detail_url)
    print("opened detail_url " + detail_url)
    # Extract each part of the message
    print("start extracting message content")
    # .find_elements() has no .text or .get_attribute; only .find_element() does
    try:
        # 1 Message time
        message_date_temp = WebDriverWait(driver, 5).until(lambda driver: driver.find_element(By.XPATH, '//li[@class="replyMsg"]/span[2]')).text
        print("raw time text: " + message_date_temp)
        message_date = re.search(r'\d{4}-\d{2}-\d{2}', message_date_temp).group()
        print("message time: " + message_date)
        message_datetime = dparser.parse(message_date, fuzzy=True)
        # if message_datetime < start_date:
        #     return
        # 2 Message title
        message_title = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//div[@class="replyInfoHead clearfix"]//h1[@class="fl"]')).text.strip()
        print("message title: " + message_title)
        # 3 Message type: suggestion, complaint, inquiry
        message_type = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//p[@class="typeNameD"]')).text.strip()
        print("message type: " + message_type)
        # 4 Message label: urban construction, healthcare, ...
        message_label = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//p[@class="domainName"]')).text.strip()
        print("message label: " + message_label)
        # 5 Message state: replied, handled, not replied, in progress
        message_state = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//p[@class="stateInfo"]')).text.strip()
        print("message state: " + message_state)
        # 6 Message content
        message_content = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//div[@class="clearfix replyContent"]//p[@id="replyContentMain"]')).text.strip()
        print("message content: " + message_content)
        try:
            # 7 Reply content
            reply_content = WebDriverWait(driver, 2.5).until(lambda driver: driver.find_element(By.XPATH, '//div[@class="replyHandleMain fl"]//p[@class="handleContent noWrap sitText"]')).text.strip()
            print("reply content: " + reply_content)
            # 8 Reply time
            reply_date_temp = WebDriverWait(driver, 2.5).until(
                lambda driver: driver.find_element(By.XPATH, '//div[@class="handleTime"]')).text
            reply_date = re.search(r'\d{4}-\d{2}-\d{2}', reply_date_temp).group()
            print("reply time: " + reply_date)
            # 9 Replying institution
            reply_institute = WebDriverWait(driver, 2.5).until(
                lambda driver: driver.find_element(By.XPATH, '//div[@class="replyHandleMain fl"]/div/h4')).text.strip()
            print("replying institution: " + reply_institute)
        except:
            print("this message has no reply, url: " + str(detail_url))
            reply_content = ""
            print("reply content set to empty")
            reply_date = ""
            print("reply time set to empty")
            reply_institute = ""
            print("replying institution set to empty")
        # Write the row to the CSV file
        writer.writerow([position, message_title, message_type, message_label, message_datetime, message_content, reply_content, reply_date, reply_institute])
    except Exception as e:
        print(f"An error occurred: {str(e)}")
        # Page failed to load; refresh it
        driver.refresh()
        # get_message_detail(driver, detail_url, writer, position)
        time.sleep(3)  # wait 3 seconds; adjust as needed


def get_officer_messages(args):
    '''Fetch and save all messages for one official'''
    print("get_officer_messages started")
    user_agent = get_user_agent()
    chrome_options.add_argument('user-agent=%s' % user_agent)
    driver = webdriver.Chrome(options=chrome_options)
    index, fid = args
    list_url = "http://liuyan.people.com.cn/threads/list?fid={}".format(fid)
    driver.get(list_url)  # load the url in the browser
    try:
        position = WebDriverWait(driver, 10).until(lambda driver: driver.find_element(By.XPATH, "/html/body/div[1]/div[2]/main/div/div/div[2]/div/div[1]/h2")).text
        print(index, '-- officer --', position)
        start_time = time.time()
        csv_name = str(fid) + '.csv'
        # If the file exists, delete it and create it again
        if os.path.exists(csv_name):
            os.remove(csv_name)
        with open(csv_name, 'a+', newline='', encoding='gb18030') as f:
            writer = csv.writer(f, dialect="excel")
            # Header: position, title, type, label, message date, content, reply content, reply date, replier
            writer.writerow(['职位', '留言标题', '留言类型', '留言标签', '留言日期', '留言内容', '回复内容', '回复日期', '回复人'])
            for detail_url in get_detail_urls(position, list_url):
                get_message_detail(driver, detail_url, writer, position)
                time.sleep(get_time())
        end_time = time.time()
        crawl_time = int(end_time - start_time)
        crawl_minute = crawl_time // 60
        crawl_second = crawl_time % 60
        print(position, 'finished crawling!')
        print('Time for this official: {} min {} s.'.format(crawl_minute, crawl_second))
        driver.quit()
        time.sleep(5)
    except:
        driver.quit()
        get_officer_messages(args)


def main():
    '''Main function'''
    fids = get_fid()
    print('Crawler started:')
    s_time = time.time()
    # Pair each fid with an index so the arguments are iterable
    itera_merge = list(zip(range(1, len(fids) + 1), fids))
    # Create the process pool
    pool = Pool(3)
    # Map the tasks and their arguments onto the pool
    pool.map(get_officer_messages, itera_merge)
    print('Crawler finished!')
    e_time = time.time()
    c_time = int(e_time - s_time)
    c_minute = c_time // 60
    c_second = c_time % 60
    print('{} officials took {} min {} s in total.'.format(len(fids), c_minute, c_second))


if __name__ == '__main__':
    # Run the main function
    main()
```

2 answers in total

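The XPath is unique, so if the time only occasionally fails to come through, the most likely cause is that the page element has not finished rendering yet. I suggest taking the other answer's advice and crawling the API instead. If you want to track down the problem in your current code, try setting a longer wait time and see whether it still occurs.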
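A minimal sketch of the "wait longer" idea, assuming the cause is that the `span` exists before the front end has filled in its text: `find_element` returns as soon as the node is in the DOM, so the wait in the question can succeed while `.text` is still empty. The helper name `text_matches` is hypothetical (it is not part of Selenium); it builds a condition that stays falsy until the element's text actually contains a date, which makes `WebDriverWait` keep polling.

```python
import re


def text_matches(locator, pattern):
    """Build a wait condition that is truthy only once the element's text matches `pattern`.

    `locator` is a (by, value) tuple as used by driver.find_element.
    """
    regex = re.compile(pattern)

    def condition(driver):
        # The element can exist while its text is still empty, so check the text itself
        text = driver.find_element(*locator).text
        match = regex.search(text or "")
        # A falsy return value tells WebDriverWait to poll again until the timeout
        return match.group() if match else False

    return condition


# Usage with Selenium (shown as comments; the XPath is the one from the question):
# from selenium.webdriver.common.by import By
# from selenium.webdriver.support.ui import WebDriverWait
# message_date = WebDriverWait(driver, 15).until(
#     text_matches((By.XPATH, '//li[@class="replyMsg"]/span[2]'), r"\d{4}-\d{2}-\d{2}"))
```

`WebDriverWait.until` returns the condition's truthy value, so `message_date` here would already be the matched date string. If the timeout is still reached with this condition in place, the empty value is probably not a rendering delay, and the API route is the better option.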

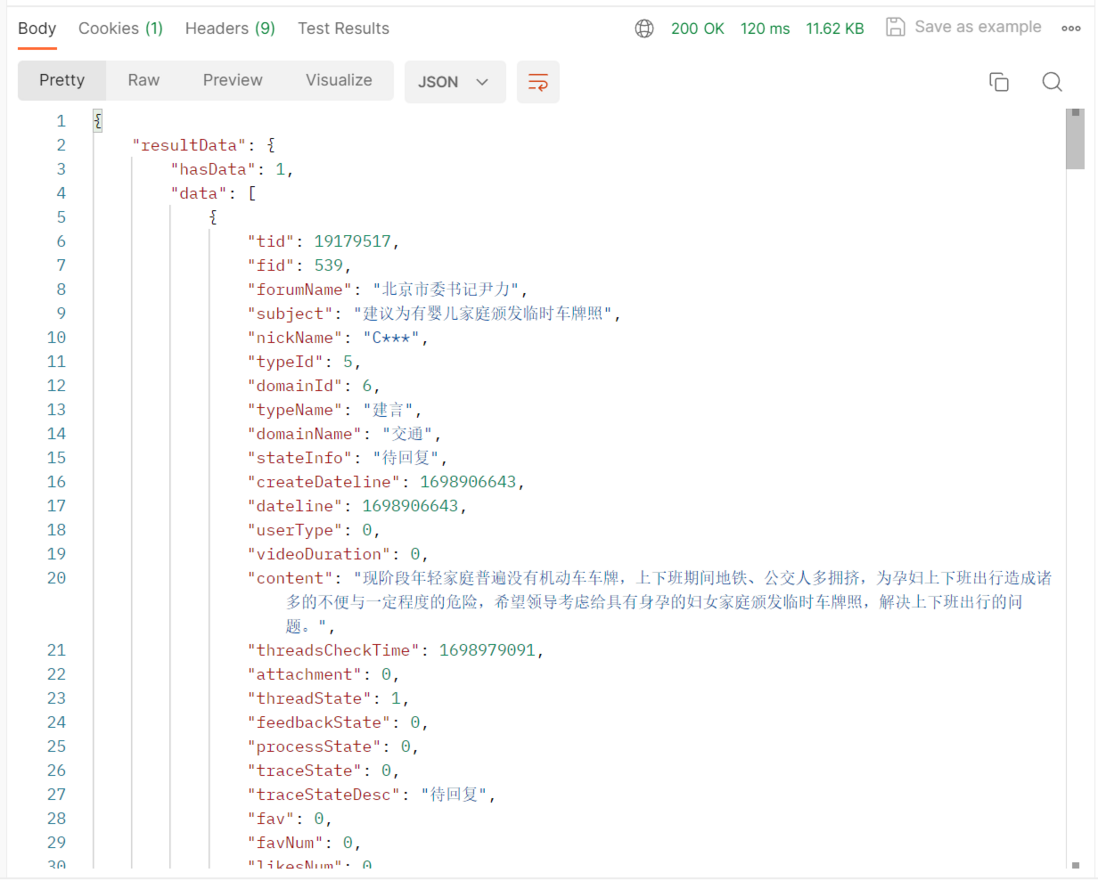

Personally, I think you could just try crawling the API directly.

```python
import requests

url = "https://liuyan.people.com.cn/v1/threads/list/df"

payload = (
    "{\r\n \"appCode\": \"PC42ce3bfa4980a9\","
    "\r\n \"token\": \"\","
    "\r\n \"signature\": \"74de794b99edb6acabc1ce088697504c\","
    "\r\n \"param\": \"{\\\"fid\\\":\\\"539\\\",\\\"showUnAnswer\\\":1,\\\"typeId\\\":5,"
    "\\\"lastItem\\\":\\\"\\\",\\\"position\\\":0,\\\"rows\\\":10,\\\"orderType\\\":2}\""
    "\r\n}"
)

headers = {
    'Accept': 'application/json, text/plain, */*',
    'Accept-Language': 'zh-CN',
    'Cache-Control': 'no-cache',
    'Connection': 'keep-alive',
    'Content-Type': 'application/json;charset=UTF-8',
    'Cookie': 'wdcid=2cc467a9d1b73009; __jsluid_h=c785543073cdfb172a4615be09872320; sfr=1; __jsluid_s=52de5ba57ef501661168f96960f8d1c2; Hm_lvt_40ee6cb2aa47857d8ece9594220140f1=1698999857; language=zh-CN; deviceId=425032c4-6e87-46a8-8547-a85b050ea3c0; Hm_lpvt_40ee6cb2aa47857d8ece9594220140f1=1699000123; __jsluid_s=99d5863c7d4e9985a0ef216b715b00c1',
    'Origin': 'https://liuyan.people.com.cn',
    'Pragma': 'no-cache',
    'Referer': 'https://liuyan.people.com.cn/threads/list?fid=539',
    'Sec-Fetch-Dest': 'empty',
    'Sec-Fetch-Mode': 'cors',
    'Sec-Fetch-Site': 'same-origin',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36',
    'sec-ch-ua': '"Chromium";v="118", "Google Chrome";v="118", "Not=A?Brand";v="99"',
    'sec-ch-ua-mobile': '?0',
    'sec-ch-ua-platform': '"Windows"',
    'sec-gpc': '1',
    'token': ''
}

response = requests.request("POST", url, headers=headers, data=payload)
print(response.text)
```
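The question also asks how to save results as the crawl goes and resume after an interruption. One possible approach, sketched here with only the standard library: append each row to the CSV as soon as it is fetched, and on startup read back the ids already saved so they can be skipped. The function names and the `fetch_row` callable are hypothetical placeholders for whatever fetches one message (the Selenium detail page or the API above); storing the `tid` in the first column is also an assumption.

```python
import csv
import os


def load_done_ids(csv_path, id_column=0):
    """Return the set of ids already saved in csv_path (empty if the file is missing)."""
    if not os.path.exists(csv_path):
        return set()
    with open(csv_path, newline="", encoding="utf-8") as f:
        return {row[id_column] for row in csv.reader(f) if row}


def crawl_with_resume(ids, fetch_row, csv_path):
    """Fetch every id not yet in csv_path, appending and flushing one row at a time."""
    done = load_done_ids(csv_path)
    with open(csv_path, "a", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        for item_id in ids:
            if item_id in done:
                continue  # already saved by an earlier run
            # fetch_row is a hypothetical callable: returns a list of fields, or None on failure
            row = fetch_row(item_id)
            if row is None:
                continue  # leave it unsaved so the next run retries it
            writer.writerow([item_id, *row])
            f.flush()  # push the row to disk before moving on
            done.add(item_id)
    return done
```

For this to work with the code in the question, the `os.remove(csv_name)` step at the start of `get_officer_messages` would have to go, since it deletes earlier progress on every run.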
