How do I merge audio and video on Ubuntu 22.04?

Requirement:

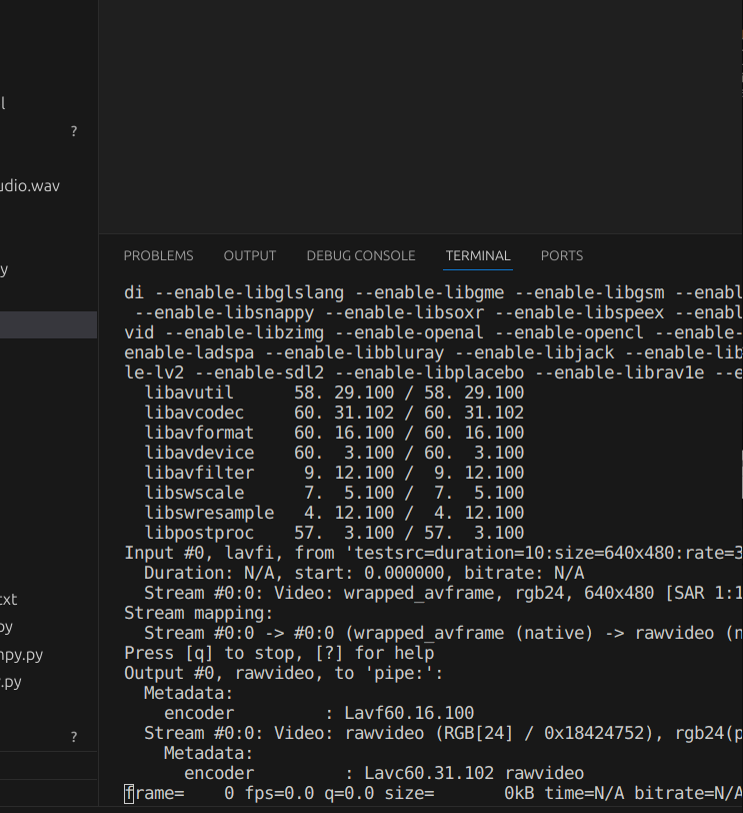

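The audio is generated dynamically and the video comes from a fixed source; the code uses `testsrc`. The code hangs while writing rawvideo to the named pipe, and no error is reported.

Sample code: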

    import subprocess
    import os
    from threading import Thread

    import numpy as np
    from transformers import VitsModel, VitsTokenizer, PreTrainedTokenizerBase
    import torch
    import ffmpeg


    def read_frame_from_stdout(vedioProcess, width, height):
        frame_size = width * height * 3
        input_bytes = vedioProcess.stdout.read(frame_size)
        if not input_bytes:
            return
        assert len(input_bytes) == frame_size
        return np.frombuffer(input_bytes, np.uint8).reshape([height, width, 3])


    def writer(vedioProcess, pipe_name, chunk_size):
        width = 640
        height = 480
        while True:
            input_frame = read_frame_from_stdout(vedioProcess, width, height)
            print('read frame is:' % input_frame)
            if input_frame is None:
                print('read frame is: None')
                break
            frame = input_frame * 0.3
            os.write(fd_pipe, (frame.astype(np.uint8).tobytes()))
        # Close the pipe like a file.
        os.close(fd_pipe)


    # Load the TTS model
    def loadModel(device: str):
        model = VitsModel.from_pretrained("./mms-tts-eng", local_files_only=True).to(device)  # facebook/mms-tts-deu
        tokenizer = VitsTokenizer.from_pretrained("./mms-tts-eng", local_files_only=True)
        return model, tokenizer


    # Convert 32-bit float samples to 16-bit integers (for a 16000 Hz sample rate)
    def covertFl32ToInt16(nyArr):
        return np.int16(nyArr / np.max(np.abs(nyArr)) * 32767)


    def audioWriteInPipe(nyArr, audioPipeName):
        # Write the converted samples to the named pipe like a file.
        os.write(audioPipeName, covertFl32ToInt16(nyArr.squeeze()).tobytes())


    # Generate the waveform as a NumPy array
    def generte(prompt: str, device: str, model: VitsModel, tokenizer: PreTrainedTokenizerBase):
        inputs = tokenizer(prompt, return_tensors="pt").to(device)
        with torch.no_grad():
            output = model(**inputs).waveform
        return output.cpu().numpy()


    def soundPipeWriter(model, device, tokenizer, pipeName):
        fd_pipe = os.open(pipeName, os.O_WRONLY)
        filepath = 'night.txt'
        for content in read_file(filepath):
            print(content)
            audioWriteInPipe(generte(prompt=content, device=device, model=model, tokenizer=tokenizer), audioPipeName=fd_pipe)
        os.close(fd_pipe)


    # Read the source text file line by line
    def read_file(filepath: str):
        with open(filepath) as fp:
            for content in fp:
                yield content


    def record(vedioProcess, model, tokenizer, device):
        # Names of the "Named pipes"
        pipeA = "audio_pipe1"
        pipeV = "vedio_pipe2"
        # Create "named pipes".
        os.mkfifo(pipeA)
        os.mkfifo(pipeV)
        # Open FFmpeg as sub-process
        # Use two audio input streams:
        # 1. Named pipe: "audio_pipe1"
        # 2. Named pipe: "audio_pipe2"
        process = (
            ffmpeg
            .concat(ffmpeg.input("pipe:vedio_pipe2"), ffmpeg.input("pipe:audio_pipe1"), v=1, a=1)
            .output("merge_audio_vedio.mp4", pix_fmt='yuv480p', vcodec='copy', acodec='aac')
            .run_async(pipe_stderr=True)
        )
        # Initialize two "writer" threads (each writer writes data to a named pipe).
        thread1 = Thread(target=soundPipeWriter, args=(model, device, tokenizer, pipeA))  # thread1 writes audio to pipeA
        thread2 = Thread(target=writer, args=(vedioProcess, pipeV, 1024))  # thread2 writes video to pipeV
        # Start the two threads
        thread1.start()
        thread2.start()
        # Wait for the two writer threads to finish
        thread1.join()
        thread2.join()
        process.wait()  # Wait for FFmpeg sub-process to finish
        # Remove the "named pipes".
        os.unlink(pipeV)
        os.unlink(pipeA)


    if __name__ == "__main__":
        device: str = "cuda:0" if torch.cuda.is_available() else "cpu"
        model, tokenizer = loadModel(device=device)
        # make lavfi-testSrc 60s mp4
        vedioProcess = (
            ffmpeg
            .input('testsrc=duration=10:size=640x480:rate=30', f="lavfi", t=60)
            .output('pipe:', format='rawvideo', pix_fmt='rgb24')
            .run_async(pipe_stdout=True)
        )
        record(vedioProcess, model, tokenizer, device)
        vedioProcess.wait()

Screenshot from VS Code:

2 answers

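The video stalled because the encoding was wrong; switching to h264 gets past it. The audio input was unusable because no encoding was specified for it. The complete example follows: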

    import subprocess
    import os
    from threading import Thread

    import numpy as np
    from transformers import VitsModel, VitsTokenizer, PreTrainedTokenizerBase
    import torch
    import ffmpeg


    # Write the video data
    def writer(data, pipe_name, chunk_size):
        dataLength = len(data)
        print('arg data is print')
        print('data length:%d, chunk size:%d' % (dataLength, chunk_size))
        # Open the pipe like a file (for writing only).
        fd_pipe = os.open(pipe_name, os.O_WRONLY)  # fd_pipe is a file descriptor (an integer)
        for i in range(0, dataLength, chunk_size):
            print('start write start:%d, finish:%d' % (i, chunk_size+i))
            # Write to the named pipe like a file, in small chunks.
            os.write(fd_pipe, data[i:chunk_size+i])  # Write up to chunk_size bytes to fd_pipe
            print('writing...')
        # Close the pipe like a file.
        os.close(fd_pipe)


    # Load the TTS model
    def loadModel(device: str):
        # model = VitsModel.from_pretrained("facebook/mms-tts-eng").to(device)  # facebook/mms-tts-deu
        # tokenizer = VitsTokenizer.from_pretrained("facebook/mms-tts-eng")
        model = VitsModel.from_pretrained("./mms-tts-eng", local_files_only=True).to(device)  # facebook/mms-tts-deu
        tokenizer = VitsTokenizer.from_pretrained("./mms-tts-eng", local_files_only=True)
        return model, tokenizer


    # Convert 32-bit float samples to 16-bit integers (for a 16000 Hz sample rate)
    def covertFl32ToInt16(nyArr):
        return np.int16(nyArr / np.max(np.abs(nyArr)) * 32767)


    def audioWriteInPipe(nyArr, audioPipeName):
        # Write the converted samples to the named pipe like a file.
        os.write(audioPipeName, covertFl32ToInt16(nyArr.squeeze()).tobytes())


    # Generate the waveform as a NumPy array
    def generte(prompt: str, device: str, model: VitsModel, tokenizer: PreTrainedTokenizerBase):
        inputs = tokenizer(prompt, return_tensors="pt").to(device)
        with torch.no_grad():
            output = model(**inputs).waveform
        return output.cpu().numpy()


    def soundPipeWriter(model, device, tokenizer, pipeName):
        fd_pipe = os.open(pipeName, os.O_WRONLY)
        filepath = 'night.txt'
        for content in read_file(filepath):
            print(content)
            audioWriteInPipe(generte(prompt=content, device=device, model=model, tokenizer=tokenizer), audioPipeName=fd_pipe)
        os.close(fd_pipe)


    # Read the source text file line by line
    def read_file(filepath: str):
        with open(filepath) as fp:
            for content in fp:
                yield content


    def record(vedioData, model, tokenizer, device):
        # Names of the "Named pipes"
        pipeA = "audio_pipe1"
        pipeV = "vedio_pipe2"
        # Create "named pipes".
        os.mkfifo(pipeA)
        os.mkfifo(pipeV)
        # Open FFmpeg as sub-process with two inputs:
        # 1. H.264 video from the named pipe "vedio_pipe2"
        # 2. Raw s16le mono audio at 16000 Hz from the named pipe "audio_pipe1"
        process = subprocess.Popen(
            ["ffmpeg", "-i", pipeV,
             "-ar", "16000", "-f", "s16le", "-ac", "1", "-i", pipeA,
             "-c:v", "copy", "-c:a", "aac", "merge_audio_vedio.mp4"],
            stdin=subprocess.PIPE)
        # Initialize two "writer" threads (each writer writes data to a named pipe).
        thread1 = Thread(target=soundPipeWriter, args=(model, device, tokenizer, pipeA))  # thread1 writes audio to pipeA
        thread2 = Thread(target=writer, args=(vedioData, pipeV, 1024))  # thread2 writes video to pipeV in 1024-byte chunks
        # Start the two threads
        thread1.start()
        thread2.start()
        # Wait for the two writer threads to finish
        thread1.join()
        thread2.join()
        process.wait()  # Wait for FFmpeg sub-process to finish
        # Remove the "named pipes".
        os.unlink(pipeV)
        os.unlink(pipeA)


    if __name__ == "__main__":
        device: str = "cuda:0" if torch.cuda.is_available() else "cpu"
        model, tokenizer = loadModel(device=device)
        # make lavfi-testSrc 60s mp4
        vedioProcess = (
            ffmpeg
            .input('testsrc=duration=10:size=640x480:rate=30', f="lavfi", t=60)
            .output('pipe:', format='h264', pix_fmt='rgb24')
            .run_async(pipe_stdout=True)
        )
        buffer, _ = vedioProcess.communicate()
        record(buffer, model, tokenizer, device)
        vedioProcess.wait()

Based on the code and description you provided, the problem could lie in several places. Since you mention that the code hangs while writing rawvideo to the named pipe, the usual causes are:

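- Named pipe not created or used correctly: make sure you use the correct pipe name, and that the name is the same where the pipe is created and where it is written.

- Wrong FFmpeg command format or parameters: make sure the FFmpeg command and its parameters are correct, especially the parts that refer to the named pipes.

- Thread synchronization: because you use multiple threads, there may be a synchronization problem. Make sure each thread finishes properly and has written all of its data to the pipe before it ends.

- FFmpeg process not started or stopped correctly: check whether `vedioProcess` started successfully and whether it is closed properly when the `record` function returns.

- Named pipe buffer: a named pipe has a finite kernel buffer (64 KiB by default on Linux); once it is full, a write blocks until the reader consumes some data.

- Interaction between Python and the FFmpeg process: make sure Python interacts with the FFmpeg process correctly and that FFmpeg can actually read the data passed in through the named pipes.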
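The blocking behaviour behind the pipe-related causes above can be reproduced without FFmpeg. The following is a minimal sketch, assuming Linux and a throwaway FIFO; the function name `fifo_roundtrip` and the pipe name `demo_pipe` are made up for illustration. Opening a FIFO for writing blocks until some process opens it for reading, and a write larger than the pipe buffer only completes because the reader thread keeps draining it.

```python
import os
import tempfile
import threading


def fifo_roundtrip(payload: bytes, chunk_size: int = 1024) -> bytes:
    """Send payload through a named pipe and return what the reader received."""
    received = bytearray()
    with tempfile.TemporaryDirectory() as tmp:
        fifo_path = os.path.join(tmp, "demo_pipe")  # hypothetical pipe name
        os.mkfifo(fifo_path)

        def reader() -> None:
            # Opening for reading blocks until a writer opens the other end.
            with open(fifo_path, "rb") as fifo:
                while True:
                    chunk = fifo.read(65536)
                    if not chunk:  # EOF: the writer closed its end
                        break
                    received.extend(chunk)

        reader_thread = threading.Thread(target=reader)
        reader_thread.start()

        # Opening for writing blocks until a reader opens the other end.
        fd = os.open(fifo_path, os.O_WRONLY)
        try:
            view = memoryview(payload)
            for start in range(0, len(view), chunk_size):
                chunk = view[start:start + chunk_size]
                while chunk:
                    # os.write may write fewer bytes than requested.
                    written = os.write(fd, chunk)
                    chunk = chunk[written:]
        finally:
            os.close(fd)  # closing signals EOF to the reader
        reader_thread.join()
    return bytes(received)


if __name__ == "__main__":
    data = os.urandom(200_000)  # larger than the default 64 KiB pipe buffer
    assert fifo_roundtrip(data) == data
    print("round trip ok")
```

If the reader thread is removed, the `os.open(..., os.O_WRONLY)` call never returns, which looks like a silent hang with no error.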

Some possible fixes for these issues:

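- Check the named pipe names: make sure the same name is used when the pipe is created and when it is opened.

- Verify the FFmpeg command: try running your FFmpeg command directly on the command line to confirm that it works.

- Synchronize the threads: make sure all threads have finished their work before the named pipes are closed and the FFmpeg process ends.

- Check the state of the FFmpeg process: add some log output to check whether `vedioProcess` started successfully and whether it was closed properly at the end.

- Adjust the buffer size: if possible, try resizing the named pipe's buffer to avoid blocking.

- Debug the interaction between Python and FFmpeg: add more log output to trace how Python and the FFmpeg process interact, and see whether any exception or error occurs.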
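To verify the FFmpeg command on the command line, it helps to build the argument list in one place and print it in a form that can be pasted into a shell. The sketch below is an assumption about what a raw-input variant of the command could look like, not the command from the question: raw video and raw PCM carry no header, so the format, pixel format, frame size, frame rate, sample rate and channel count are all stated before the corresponding `-i`. The helper name `build_mux_command` is hypothetical, and the video is re-encoded with libx264 here because raw frames cannot normally be stream-copied into an MP4 file.

```python
import shlex


def build_mux_command(video_pipe, audio_pipe, output,
                      width=640, height=480, fps=30, sample_rate=16000):
    """Return an ffmpeg argument list for raw RGB video plus raw s16le audio."""
    return [
        "ffmpeg", "-y",
        # Input 0: headerless rgb24 frames, so every property is spelled out.
        "-f", "rawvideo",
        "-pixel_format", "rgb24",
        "-video_size", f"{width}x{height}",
        "-framerate", str(fps),
        "-i", video_pipe,
        # Input 1: headerless signed 16-bit little-endian mono PCM.
        "-f", "s16le",
        "-ar", str(sample_rate),
        "-ac", "1",
        "-i", audio_pipe,
        # Encode both streams (assumes an ffmpeg build with libx264).
        "-c:v", "libx264", "-pix_fmt", "yuv420p",
        "-c:a", "aac",
        output,
    ]


if __name__ == "__main__":
    cmd = build_mux_command("vedio_pipe2", "audio_pipe1", "merge_audio_vedio.mp4")
    # Print a shell-quoted version to test by hand before wiring up the threads.
    print(shlex.join(cmd))
```

The same list can be passed unchanged to `subprocess.Popen`, so the command tested by hand and the command run from Python stay identical.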

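Because your code is fairly complex and involves several components (Python, FFmpeg, named pipes, and so on), you will probably need to debug it step by step to find the root cause. If the suggestions above still do not solve the problem, go through each part of the code in more detail and narrow the problem down gradually.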
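One step in that narrowing-down is to make sure FFmpeg's own log output is actually being read. When a child process is started with its stderr redirected to a pipe and nothing reads that pipe, the child can block once the pipe buffer fills, which again looks like a hang with no error. The sketch below drains stderr in a background thread; the helper name `run_and_collect_stderr` is hypothetical, and a small Python child process stands in for FFmpeg so that the example runs anywhere.

```python
import subprocess
import sys
import threading


def run_and_collect_stderr(cmd):
    """Run cmd, draining its stderr in a thread so the child never blocks on it."""
    proc = subprocess.Popen(cmd, stderr=subprocess.PIPE)
    lines = []

    def drain() -> None:
        # Iterating the pipe reads until the child closes its stderr.
        for raw in proc.stderr:
            lines.append(raw.decode("utf-8", errors="replace").rstrip())

    drain_thread = threading.Thread(target=drain, daemon=True)
    drain_thread.start()
    returncode = proc.wait()
    drain_thread.join()
    proc.stderr.close()
    return returncode, lines


if __name__ == "__main__":
    # Stand-in for FFmpeg: a child that writes far more than 64 KiB to stderr.
    child = [
        sys.executable, "-c",
        "import sys\nfor i in range(20000): sys.stderr.write('log line %d\\n' % i)",
    ]
    code, log = run_and_collect_stderr(child)
    print(code, len(log))
```

With the real command, the collected lines are where FFmpeg reports an unknown pixel format or an input it cannot open.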
