Teller - the open-source universal secret manager for developers

Never leave your terminal to use secrets while developing, testing, and building your apps.

Instead of custom scripts, tokens in your .zshrc files, visible EXPORTs in your bash history, misplaced .env.production files and more around your workstation -- just use teller and connect it to any vault, key store, or cloud service you like (Teller supports Hashicorp Vault, AWS Secrets Manager, Google Secret Manager, and many more).

You can use Teller to tidy your own environment or for your team as a process and best practice.

Quick Start with teller (or tlr)

You can install teller with homebrew:

$ brew tap spectralops/tap && brew install teller

You can now use teller or tlr (if you like shortcuts!) in your terminal.

teller will pull variables from your various cloud providers, vaults and others, and will populate your current working session (in various ways, see more below) so you can work safely and much more productively.

teller needs a tellerfile. This is a .teller.yml file that lives in your repo, or one that you point teller to with teller -c your-conf.yml.

Using a GitHub Action

For those using GitHub Actions, you can have a 1-click experience of installing Teller in your CI:

- name: Setup Teller
  uses: spectralops/setup-teller@v1
- name: Run a Teller task (show, scan, run, etc.)
  run: teller run [args]

For more, check our setup teller action on the marketplace.

Create your configuration

Run teller new and follow the wizard: pick the providers you like, and it will generate a .teller.yml for you.

Alternatively, you can use the following minimal template or view a full example:

project: project_name
opts:
  stage: development

# remove if you don't like the prompt
confirm: Are you sure you want to run in {{stage}}?

providers:
  # uses environment vars to configure
  # https://github.com/hashicorp/vault/blob/api/v1.0.4/api/client.go#L28
  hashicorp_vault:
    env_sync:
      path: secret/data/{{stage}}/services/billing

  # this will fuse vars with the below .env file
  # use if you'd like to grab secrets from outside of the project tree
  dotenv:
    env_sync:
      path: ~/billing.env.{{stage}}

Now you can just run processes with:

$ teller run node src/server.js

Service is up.

Loaded configuration: Mailgun, SMTP

Port: 5050

Behind the scenes: teller fetched the correct variables and placed those (and just those) in ENV for the node process to use.
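To make the "just those" part concrete, here is a minimal Python sketch of the idea. It is not Teller's implementation: the `run_with_secrets` helper, the secret values, and the `PARENT_ONLY` variable are all hypothetical and exist only for illustration. The sketch assumes a Unix-like system where the interpreter can start with a near-empty environment.

```python
import os
import subprocess
import sys


def run_with_secrets(command, secrets):
    """Run `command` with an environment made of `secrets` and nothing else."""
    # Passing env= replaces the inherited environment rather than extending it,
    # so the child process does not see the parent's variables.
    result = subprocess.run(
        command, env=dict(secrets), capture_output=True, text=True, check=True
    )
    return result.stdout


# Hypothetical values standing in for what a provider would return.
secrets = {"SMTP_PASS": "s3cret", "MG_KEY": "key-123"}

# A variable that exists only in the parent process.
os.environ["PARENT_ONLY"] = "visible-in-parent"

child = [
    sys.executable,
    "-c",
    "import os; print(os.environ.get('SMTP_PASS'), os.environ.get('PARENT_ONLY'))",
]
output = run_with_secrets(child, secrets)
print(output.strip())
```

The child sees the fetched variable but not the one that lives only in the parent, which is the isolation that the carry_env option (default: false) describes.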

Best practices

Go and have a look at a collection of our best practices

Features


Running subprocesses

Manually exporting and setting up environment variables for running a process with demo-like / production-like set up?

Got bitten by using .env.production and exposing it in the local project itself?

Using teller and a .teller.yml file that exposes nothing to prying eyes, you can work fluently and seamlessly without keeping secrets in the project. There is also no need to quote the command:

$ teller run -- your-process arg1 arg2... --switch1 ...


Inspecting variables

This will output the current variables teller picks up. Only the first 2 letters of each value are shown.

$ teller show


Local shell population

Hardcoding secrets into your shell scripts and dotfiles?

In some cases it makes sense to eval variables into your current shell. For example in your .zshrc it makes much more sense to use teller, and not hardcode all those into the .zshrc file itself.

In this case, this is what you should add:

eval "$(teller sh)"


Easy Docker environment

Tired of grabbing all kinds of variables, setting those up, and worried about these appearing in your shell history as well?

Use this one liner from now on:

$ docker run --rm -it --env-file <(teller env) alpine sh

⚠️

Scan for secrets

Teller can help you fight secret sprawl and hard coded secrets, as well as be the best productivity tool for working with your vault.

It can also integrate into your CI and serve as a shift-left security tool for your DevSecOps pipeline.

Look for your vault-kept secrets in your code by running:

$ teller scan

You can run it as a linter in your CI like so:

run: teller scan --silent

It will break your build if it finds something (returns exit code 1).
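The idea behind the scan can be sketched in a few lines of Python: look for known secret values in text and signal failure when any are found. This is an illustration only, not how teller scan is implemented; `scan_text`, the sample source, and the sample secrets are hypothetical.

```python
def scan_text(text, secrets):
    """Return (line number, key) pairs for every secret value found in `text`."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for key, value in secrets.items():
            # Skip empty values, which would match every line.
            if value and value in line:
                findings.append((lineno, key))
    return findings


# Hypothetical source file and vault contents.
source = 'host = "smtp.example.com"\npassword = "hunter2-prod"\n'
secrets = {"SMTP_PASS": "hunter2-prod", "UNUSED": ""}

findings = scan_text(source, secrets)
# A non-zero exit code is what lets a CI step fail the build.
exit_code = 1 if findings else 0
```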

Use Teller for productively and securely running your processes and you get this for free -- nothing to configure. If some of the data you bring in is definitely not sensitive, flag it in your teller.yml:

dotenv:
  env:
    FOO:
      path: ~/my-dot-env.env
      severity: none # will skip scanning. possible values: high | medium | low | none

By default we treat all entries as sensitive, with value high.

♻️

Redact secrets from process outputs, logs, and files

You can use teller as a redaction tool across your infrastructure, and run processes while redacting their output as well as clean up logs and live tails of logs.

Run a process and redact its output in real time:

$ teller run --redact -- your-process arg1 arg2

Pipe any process output, tail or logs into teller to redact those, live:

$ cat some.log | teller redact

It should also work with tail -f:

$ tail -f /var/log/apache.log | teller redact

Finally, if you've got some files you want to redact, you can do that too:

$ teller redact --in dirty.csv --out clean.csv

If you omit --in Teller will take stdin, and if you omit --out Teller will output to stdout.
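Conceptually, live redaction is a line-by-line substitution of known secret values. The following Python sketch shows that idea under stated assumptions: `redact_stream` and the sample log are hypothetical, and the placeholder mirrors the "**REDACTED**" default mentioned in the provider configuration. It is not Teller's own code.

```python
import io


def redact_stream(lines, secrets, placeholder="**REDACTED**"):
    """Yield each line with every known secret value replaced."""
    # Replace longer values first so a secret that contains another one
    # is not left partially visible.
    ordered = sorted((s for s in secrets if s), key=len, reverse=True)
    for line in lines:
        for secret in ordered:
            line = line.replace(secret, placeholder)
        yield line


# Hypothetical log content; any iterable of lines (a file, a pipe) works.
log = io.StringIO("login ok password=hunter2\nsmtp token=hunter2-extended\n")
clean = list(redact_stream(log, ["hunter2", "hunter2-extended"]))
```

Because the function is a generator, it handles an endless stream such as a tail -f pipe one line at a time.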


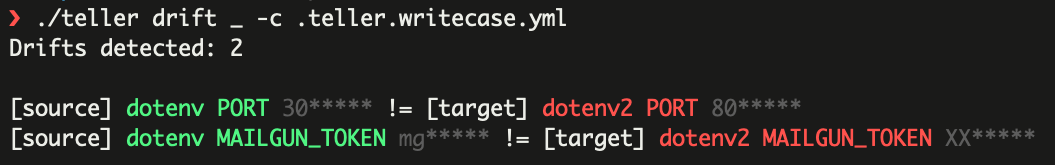

Detect secrets and value drift

You can detect secret drift by comparing values from different providers against each other. It might be that you want to pin a set of keys in different providers to always be the same value; when they aren't -- that means you have a drift.

In most cases, the keys in both providers have the same names. We call these mirrored providers. Example:

Provider1:

MG_PASS=foo***

Provider2:

MG_PASS=foo*** # Both keys are called MG_PASS

To detect mirror drifts, use teller mirror-drift.

$ teller mirror-drift --source global-dotenv --target my-dotenv

Drifts detected: 2

changed [] global-dotenv FOO_BAR "n***** != my-dotenv FOO_BAR ne*****

missing [] global-dotenv FB 3***** ??

Use mirror-drift --sync ... in order to declare that the two providers should represent a completely synchronized mirror (all keys, all values).

As always, the specific provider definitions are in your teller.yml file.
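The comparison behind a mirror drift can be sketched as a plain dictionary diff. This Python snippet is an illustration, not Teller's implementation; `mirror_drift` and the sample values are hypothetical.

```python
def mirror_drift(source, target):
    """Compare two key/value mappings and list the keys that drifted."""
    drifts = []
    for key in sorted(source):
        if key not in target:
            # The key exists in the source but not in the target.
            drifts.append(("missing", key))
        elif source[key] != target[key]:
            # The key exists in both, with different values.
            drifts.append(("changed", key))
    return drifts


# Hypothetical provider contents.
global_dotenv = {"FOO_BAR": "n12345", "FB": "3abcde", "SAME": "x"}
my_dotenv = {"FOO_BAR": "ne1234", "SAME": "x"}

drifts = mirror_drift(global_dotenv, my_dotenv)
```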


Detect secrets and value drift (graph links between providers)

Sometimes you want to check drift between two providers whose keys have different names. For example:

Provider1:

MG_PASS=foo***

Provider2:

MAILGUN_PASS=foo***

This poses a challenge. We need some way to "wire" the keys MG_PASS and MAILGUN_PASS and declare a relationship of source (MG_PASS) and destination, or sink (MAILGUN_PASS).

For this, you can label a mapping as a source and couple it with the appropriate sink by giving both the same label value, effectively creating a graph of wirings. We call this graph-drift. Source values are then compared against sink values in your configuration:

providers:
  dotenv:
    env_sync:
      path: ~/my-dot-env.env
      source: s1
  dotenv2:
    kind: dotenv
    env_sync:
      path: ~/other-dot-env.env
      sink: s1

Then run:

$ teller graph-drift dotenv dotenv2 -c your-config.yml
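The wiring idea can be sketched in Python as matching entries by label rather than by key name. This is a simplified model, not Teller's implementation: the entry dictionaries and the `graph_drift` helper are hypothetical, and each entry carries its own label here for brevity.

```python
def graph_drift(entries):
    """Return (source key, sink key) pairs whose wired values differ."""
    # Index source entries by label so each sink can find its counterpart.
    sources = {e["source"]: e for e in entries if "source" in e}
    drifts = []
    for entry in entries:
        source = sources.get(entry.get("sink"))
        if source is not None and source["value"] != entry["value"]:
            drifts.append((source["key"], entry["key"]))
    return drifts


# Hypothetical entries: MG_PASS is wired to MAILGUN_PASS through label "s1".
entries = [
    {"provider": "dotenv", "key": "MG_PASS", "value": "foo-old", "source": "s1"},
    {"provider": "dotenv2", "key": "MAILGUN_PASS", "value": "foo-new", "sink": "s1"},
    {"provider": "dotenv2", "key": "UNWIRED", "value": "x"},
]
drifts = graph_drift(entries)
```

Entries without a label, such as UNWIRED, take no part in the comparison.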


Populate templates

Have a kickstarter project you want to populate quickly with some variables (not secrets though!)?

Have a production project that just has to have a file to read that contains your variables?

You can use teller to inject variables into your own templates (based on go templates).

With this template:

Hello, {{.Teller.EnvByKey "FOO_BAR" "default-value" }}!

Run:

$ teller template my-template.tmpl out.txt

This produces, assuming FOO_BAR=Spock:

Hello, Spock!


Copy/sync data between providers

In cases where you want to sync between providers, you can do that with teller copy.

Specific mapping key sync

$ teller copy --from dotenv1 --to dotenv2,heroku1

This will:

- Grab all mapped values from source (dotenv1)
- For each target provider, find the matching mapped key, and copy the value from source into it

Full copy sync

$ teller copy --sync --from dotenv1 --to dotenv2,heroku1

This will:

- Grab all mapped values from source (dotenv1)
- For each target provider, perform a full copy of values from source into the mapped env_sync key

Notes:

- The mapping per provider is as configured in your teller.yml file, in the env_sync or env properties.
- This sync will try to copy all values from the source.


Write and multi-write to providers

Teller providers support write use cases, which allow writing values into providers.

Remember, this feature still revolves around the definitions in your teller.yml file:

$ teller put FOO_BAR=$MY_NEW_PASS --providers dotenv -c .teller.write.yml

A few notes:

- Values are key-value pairs in the format key=value, and you can specify multiple pairs at once
- When you're specifying a literal sensitive value, make sure to use an ENV variable so that nothing sensitive is recorded in your history
- The flag --providers lets you push to one or more providers at once
- FOO_BAR must be a mapped key in your configuration for each provider you want to update

Sometimes you don't have a mapped key in your configuration file and want to perform an ad-hoc write. You can do that with --path:

$ teller put SMTP_PASS=newpass --path secret/data/foo --providers hashicorp_vault

A few notes:

- The pair SMTP_PASS=newpass will be pushed to the specified path
- While you can push to multiple providers, please make sure the path semantics are the same

✅

Prompts and options

There are a few options that you can use:

carry_env - carry the environment from the parent process into the child process. By default we isolate the child process from the parent process. (default: false)

confirm - an interactive question to prompt the user before taking action (such as running a process). (default: empty)

opts - a dict for our own variable/setting substitution mechanism. For example:

opts:
  region: env:AWS_REGION
  stage: qa

And now you can use paths like /{{stage}}/{{region}}/billing-svc wherever you want (this templating is available for the confirm question too).

If you prefix a value with env: it will get pulled from your current environment.
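The substitution can be pictured with a short Python sketch. It is not Teller's implementation: `resolve_opts` and `render` are hypothetical helpers, and two behaviours are assumptions made for the example (a missing environment variable resolves to an empty string, and unknown placeholders are left untouched).

```python
import os
import re


def resolve_opts(opts, environ=os.environ):
    """Resolve `env:NAME` values from the environment, keep literals as-is."""
    resolved = {}
    for name, value in opts.items():
        if value.startswith("env:"):
            # Assumption: a missing variable becomes an empty string.
            resolved[name] = environ.get(value[len("env:"):], "")
        else:
            resolved[name] = value
    return resolved


def render(template, opts):
    """Replace {{name}} placeholders with the matching option value."""
    # Assumption: placeholders without a matching option are left untouched.
    return re.sub(
        r"\{\{\s*(\w+)\s*\}\}",
        lambda m: opts.get(m.group(1), m.group(0)),
        template,
    )


# The environment is passed explicitly so the example is reproducible.
opts = resolve_opts(
    {"region": "env:AWS_REGION", "stage": "qa"},
    environ={"AWS_REGION": "us-east-1"},
)
path = render("/{{stage}}/{{region}}/billing-svc", opts)
```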

YAML Export in YAML format

You can export in a YAML format, suitable for GCloud:

$ teller yaml

Example format:

FOO: "1"

KEY: VALUE

JSON Export in JSON format

You can export in a JSON format, suitable for piping through jq or other workflows:

$ teller json

Example format:

{
  "FOO": "1"
}

Providers

For each provider, there are a few points to understand:

- Sync - full sync support: can we provide a path to a whole environment and have it synced (all keys, all values)? Some of the providers support this and some don't.

- Key format - some of the providers expect a path-like key, some env-var like, and some don't care. We'll specify for each.

General provider configuration

We use the following general structure to specify sync mapping for all providers:

# you can use either `env_sync` or `env` or both
env_sync:
  path: ... # path to mapping
  remap:
    PROVIDER_VAR1: VAR3 # Maps PROVIDER_VAR1 to local env var VAR3
env:
  VAR1:
    path: ... # path to value or mapping
    field: <key> # optional: use if path contains a k/v dict
    decrypt: true | false # optional: use if provider supports encryption at the value side
    severity: high | medium | low | none # optional: used for secret scanning, default is high. 'none' means not a secret
    redact_with: "**XXX**" # optional: used as a placeholder swapping the secret with it. default is "**REDACTED**"
  VAR2:
    path: ...

Remapping Provider Variables

Providers which support syncing a list of keys and values can be remapped to different environment variable keys. Typically, when teller syncs paths from env_sync, the key returned from the provider is directly mapped to the environment variable key. In some cases it might be necessary to have the provider key mapped to a different variable without changing the provider settings. This can be useful when using env_sync for Hashicorp Vault Dynamic Database credentials:

env_sync:
  path: database/roles/my-role
  remap:
    username: PGUSER
    password: PGPASSWORD

After remapping, the local environment variable PGUSER will contain the provider value for username and PGPASSWORD will contain the provider value for password.
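In effect, remapping is a key rename applied to whatever the provider returns. A minimal Python sketch, not Teller's implementation: `apply_remap` and the sample values are hypothetical, and passing unmapped keys through unchanged is an assumption based on the default behaviour described above.

```python
def apply_remap(provider_values, remap):
    """Rename provider keys according to `remap`; other keys pass through."""
    return {remap.get(key, key): value for key, value in provider_values.items()}


# Hypothetical values returned by a provider for one path.
provider_values = {"username": "app-user", "password": "app-pass", "ttl": "1h"}
remap = {"username": "PGUSER", "password": "PGPASSWORD"}

env = apply_remap(provider_values, remap)
```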

Hashicorp Vault

Authentication

If you have the Vault CLI configured and working, there's no special action to take.

Configuration is environment based, as defined by client standard. See variables here.

Features

- Sync - yes
- Mapping - yes
- Modes - read+write
- Key format - path based, usually starts with secret/data/, and more generically [engine name]/data

Example Config

hashicorp_vault:
  env_sync:
    path: secret/data/demo/billing/web/env
  env:
    SMTP_PASS:
      path: secret/data/demo/wordpress
      field: smtp

Consul

Authentication

If you have the Consul CLI working and configured, there's no special action to take.

Configuration is environment based, as defined by client standard. See variables here.

Features

- Sync - yes
- Mapping - yes
- Modes - read+write
- Key format
  - env_sync - path based, we use the last segment as the variable name
  - env - any string, no special requirement

Example Config

consul:
  env_sync:
    path: ops/config
  env:
    SLACK_HOOK:
      path: ops/config/slack

Heroku

Authentication

Requires an API key populated in your environment in: HEROKU_API_KEY (you can fetch it from your ~/.netrc).

Features

- Sync - yes
- Mapping - yes
- Modes - read+write
- Key format
  - env_sync - name of your Heroku app
  - env - the actual env variable name in your Heroku settings

Example Config

heroku:
  env_sync:
    path: my-app-dev
  env:
    MG_KEY:
      path: my-app-dev

Etcd

Authentication

If you have etcdctl already working there's no special action to take.

We follow how etcdctl takes its authentication settings. These environment variables need to be populated:

- ETCDCTL_ENDPOINTS

For TLS:

- ETCDCTL_CA_FILE
- ETCDCTL_CERT_FILE
- ETCDCTL_KEY_FILE

Features

- Sync - yes
- Mapping - yes
- Modes - read+write
- Key format
  - env_sync - path based
  - env - path based

Example Config

etcd:
  env_sync:
    path: /prod/billing-svc
  env:
    MG_KEY:
      path: /prod/billing-svc/vars/mg

AWS Secrets Manager

Authentication

Your standard AWS_DEFAULT_REGION, AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY need to be populated in your environment

Features

- Sync - yes
- Mapping - yes
- Modes - read, write: accepting PR
- Key format
  - env_sync - path based
  - env - path based

Example Config

aws_secretsmanager:
  env_sync:
    path: /prod/billing-svc
  env:
    MG_KEY:
      path: /prod/billing-svc/vars/mg

AWS Paramstore

Authentication

Your standard AWS_DEFAULT_REGION, AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY need to be populated in your environment

Features

- Sync - no
- Mapping - yes
- Modes - read, write: accepting PR
- Key format
  - env - path based
  - decrypt - available in this provider, will use KMS automatically

Example Config

aws_ssm:
  env:
    FOO_BAR:
      path: /prod/billing-svc/vars
      decrypt: true

Google Secret Manager

Authentication

You should populate GOOGLE_APPLICATION_CREDENTIALS=account.json in your environment, pointing to the relevant account.json file that you get from Google.

Features

- Sync - no
- Mapping - yes
- Modes - read, write: accepting PR
- Key format
  - env - path based, needs to include a version
  - decrypt - available in this provider, will use KMS automatically

Example Config

google_secretmanager:
  env:
    MG_KEY:
      # need to supply the relevant version (versions/1)
      path: projects/44882/secrets/MG_KEY/versions/1

.ENV (dotenv)

Authentication

No need. You'll be pointing to one or more .env files on your disk.

Features

- Sync - yes
- Mapping - yes
- Modes - read+write
- Key format
  - env - env key like

Example Config

You can mix and match any number of files, sitting anywhere on your drive.

dotenv:
  env_sync:
    path: ~/my-dot-env.env
  env:
    MG_KEY:
      path: ~/my-dot-env.env

Doppler

Authentication

Install the doppler cli then run doppler login. You'll also need to configure your desired "project" for any given directory using doppler configure. Alternatively, you can set a global project by running doppler configure set project <my-project> from your home directory.

Features

- Sync - yes
- Mapping - yes
- Modes - read
- Key format
  - env - env key like

Example Config

doppler:
  env_sync:
    path: prd
  env:
    MG_KEY:
      path: prd
      field: OTHER_MG_KEY # (optional)

Vercel

Authentication

Requires an API key populated in your environment in: VERCEL_TOKEN.

Features

- Sync - yes
- Mapping - yes
- Modes - read, write: accepting PR
- Key format
  - env_sync - name of your Vercel app
  - env - the actual env variable name in your Vercel settings

Example Config

vercel:
  env_sync:
    path: my-app-dev
  env:
    MG_KEY:
      path: my-app-dev

CyberArk Conjur

Authentication

Requires a username and API key populated in your environment:

- CONJUR_AUTHN_LOGIN
- CONJUR_AUTHN_API_KEY

Requires a .conjurrc file in your user's home directory:

---
account: conjurdemo
plugins: []
appliance_url: https://conjur.example.com
cert_file: ""

- account is the organization account created during initial deployment
- plugins will be blank
- appliance_url should be the Base URI for the Conjur service
- cert_file should be the public key certificate if running in self-signed mode

Features

- Sync - no, sync: accepting PR
- Mapping - yes
- Modes - read+write
- Key format
  - env_sync - not supported to comply with least-privilege model
  - env - the secret variable path in Conjur Secrets Manager

Example Config

cyberark_conjur:
  env:
    DB_USERNAME:
      path: /secrets/prod/pgsql/username
    DB_PASSWORD:
      path: /secrets/prod/pgsql/password

Semantics

Addressing

- Stores that support key-value interfaces: path is the direct location of the value, no need for the env key or field.
- Stores that support key-valuemap interfaces: path is the location of the map, while the env key or field (if it exists) will be used to do the additional final addressing.
- For env-sync (fetching value map out of a store), path will be a direct pointer at the key-valuemap
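The two addressing modes can be sketched in Python with an in-memory store. This only illustrates the rules above and is not Teller's implementation; `resolve`, the store layout, and the values are hypothetical.

```python
def resolve(store, path, env_key, field=None):
    """Return the value addressed by `path`, plus `field` or `env_key` for maps."""
    located = store[path]
    if isinstance(located, dict):
        # Key-valuemap store: `path` points at a map, so a final lookup is needed.
        return located[field or env_key]
    # Key-value store: `path` is the direct location of the value.
    return located


# Hypothetical store holding one direct value and one map.
store = {
    "/prod/billing-svc/vars/mg": "mg-secret",
    "secret/data/demo/wordpress": {"smtp": "smtp-secret", "SMTP_PASS": "other"},
}

direct = resolve(store, "/prod/billing-svc/vars/mg", "MG_KEY")
by_field = resolve(store, "secret/data/demo/wordpress", "SMTP_PASS", field="smtp")
by_env_key = resolve(store, "secret/data/demo/wordpress", "SMTP_PASS")
```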

Errors

- Principle of non-recovery: where possible, an error is returned when it is not recoverable (nothing to do), but when it is recoverable, providers should attempt recovery (e.g. retry the API call)

- Missing key/value: when trying to fetch a value from a provider, it may be missing. This is not an error; instead, an indication of a missing entry is returned (EnvEntry#IsFound)
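The "missing is not an error" rule can be modelled with a small Python sketch. The class and function here are hypothetical and only loosely named after EnvEntry#IsFound; they do not reproduce Teller's Go types.

```python
from dataclasses import dataclass


@dataclass
class EnvEntry:
    key: str
    value: str = ""
    is_found: bool = False


def fetch(store, key):
    """Return an entry for `key`; a missing key is flagged, not raised."""
    if key in store:
        return EnvEntry(key=key, value=store[key], is_found=True)
    return EnvEntry(key=key)


# Hypothetical provider contents.
store = {"MG_KEY": "mg-secret"}
entries = [fetch(store, key) for key in ("MG_KEY", "SMTP_PASS")]
missing = [entry.key for entry in entries if not entry.is_found]
```

Callers decide what to do with the entries that were not found, instead of having the whole fetch fail.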

Security Model

Project Practices

- We vendor our dependencies and push them to the repo. This creates an immutable, independent build, that's also free from risks of fetching unknown code in CI/release time.

Providers

For every provider, we are federating all authentication and authorization concerns to the system of origin. In other words, if for example you connect to your organization's Hashicorp Vault, we assume you already have a secure way to do that, which is "blessed" by the organization.

In addition, we don't offer any way to specify connection details to these systems in writing (in configuration files or other), and all connection details, to all providers, should be supplied via environment variables.

That allows us to keep two important points:

- Don't undermine the user's security model and threat modeling for the sake of productivity (security AND productivity CAN be attained)

- Don't encourage the user to do the very thing we're here to prevent: leaving secrets and sensitive details forgotten in various places.

Developer Guide

- Quick testing as you code: make lint && make test
- Checking your work before PR, run also integration tests: make integration

Testing

Testing is composed of unit tests and integration tests. Integration tests are based on testcontainers as well as live sandbox APIs (where containers are not available).

- Unit tests are a mix of pure and mocks based tests, abstracting each provider's interface with a custom client

- View integration tests

To run all unit tests without integration:

$ make test

To run all unit tests including container-based integration:

$ make integration

To run all unit tests including container and live API based integration (this is effectively all integration tests):

$ make integration_api

Running all tests:

$ make test

$ make integration_api

Linting

Linting is treated as a form of testing (using golangci, configuration here), to run:

$ make lint

Thanks:

To all Contributors - you make this happen, thanks!

Copyright

Copyright (c) 2021 @jondot. See LICENSE for further details.
