如何使用Apache Beam中的运行时值提供程序写入大查询?

编辑:我使用beam.io.writeTobigQuery并打开了sink实验选项。我实际上已经打开了它,但我的问题是,我试图从str()包装的两个变量(dataset+table)“构建”完整的表引用。这是将整个值提供程序参数数据作为一个字符串,而不是调用get()方法只获取值。

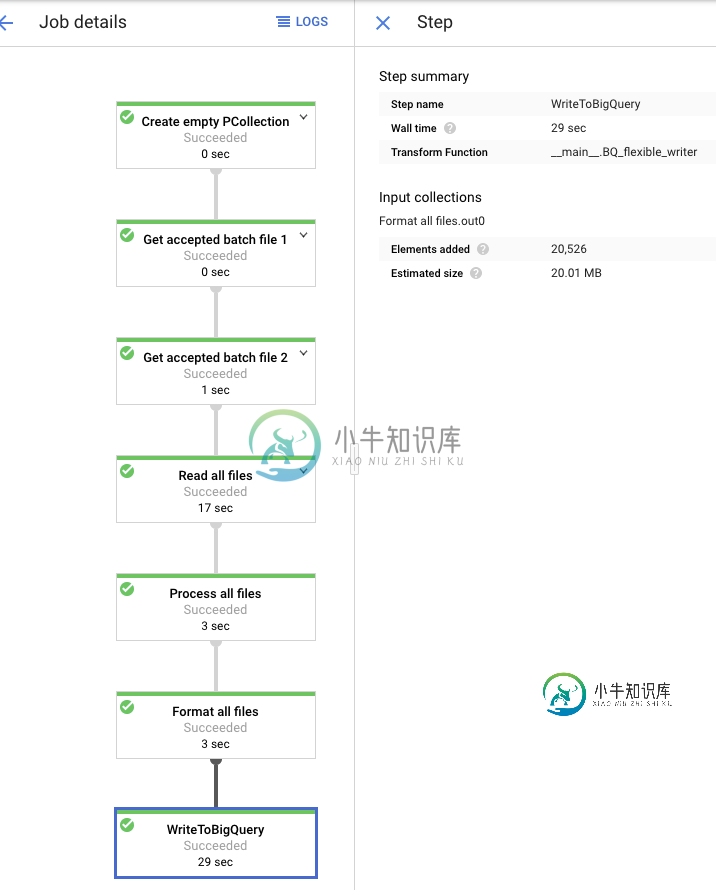

我试图生成一个数据流模板,然后从GCP云函数调用。(作为参考,我的数据流作业应该读取一个文件,其中有一堆文件名,然后从GCS读取所有这些文件名,并将其写入BQ)。因此,我需要以这样一种方式编写它,以便能够使用运行时值提供程序传递BigQuery数据集/表。

在我的帖子的底部是我的代码,省略了一些与问题无关的东西。特别要注意BQ_flexible_writer(beam.dofn)--这是我试图“定制”beam.io.writeTobigQuery的地方,以便它接受运行时值提供程序。

我的模板生成很好,当我在不提供运行时变量的情况下测试运行管道时(依赖于默认值),它会成功,并且在控制台中查看作业时看到添加的行。但是,当检查BigQuery时,没有数据(tripple检查了日志中的数据集/表名是否正确)。不确定它去了哪里,或者我可以添加什么日志来理解元素发生了什么?

#=========================================================

class BQ_flexible_writer(beam.DoFn):

def __init__(self, dataset, table):

self.dataset = dataset

self.table = table

def process(self, element):

dataset_res = self.dataset.get()

table_res = self.table.get()

logging.info('Writing to table: {}.{}'.format(dataset_res,table_res))

beam.io.WriteToBigQuery(

#dataset= runtime_options.dataset,

table = str(dataset_res) + '.' + str(table_res),

schema = SCHEMA_ADFImpression,

project = str(PROJECT_ID), #options.display_data()['project'],

create_disposition = beam.io.BigQueryDisposition.CREATE_IF_NEEDED, #'CREATE_IF_NEEDED',#create if does not exist.

write_disposition = beam.io.BigQueryDisposition.WRITE_APPEND #'WRITE_APPEND' #add to existing rows,partitoning

)

# https://cloud.google.com/dataflow/docs/guides/templates/creating-templates#valueprovider

class FileIterator(beam.DoFn):

def __init__(self, files_bucket):

self.files_bucket = files_bucket

def process(self, element):

files = pd.read_csv(str(element), header=None).values[0].tolist()

bucket = self.files_bucket.get()

files = [str(bucket) + '/' + file for file in files]

logging.info('Files list is: {}'.format(files))

return files

# https://stackoverflow.com/questions/58240058/ways-of-using-value-provider-parameter-in-python-apache-beam

class OutputValueProviderFn(beam.DoFn):

def __init__(self, vp):

self.vp = vp

def process(self, unused_elm):

yield self.vp.get()

class RuntimeOptions(PipelineOptions):

@classmethod

def _add_argparse_args(cls, parser):

parser.add_value_provider_argument(

'--dataset',

default='EDITED FOR PRIVACY',

help='BQ dataset to write to',

type=str)

parser.add_value_provider_argument(

'--table',

default='EDITED FOR PRIVACY',

required=False,

help='BQ table to write to',

type=str)

parser.add_value_provider_argument(

'--filename',

default='EDITED FOR PRIVACY',

help='Filename of batch file',

type=str)

parser.add_value_provider_argument(

'--batch_bucket',

default='EDITED FOR PRIVACY',

help='Bucket for batch file',

type=str)

#parser.add_value_provider_argument(

# '--bq_schema',

#default='gs://dataflow-samples/shakespeare/kinglear.txt',

# help='Schema to specify for BQ')

#parser.add_value_provider_argument(

# '--schema_list',

#default='gs://dataflow-samples/shakespeare/kinglear.txt',

# help='Schema in list for processing')

parser.add_value_provider_argument(

'--files_bucket',

default='EDITED FOR PRIVACY',

help='Bucket where the raw files are',

type=str)

parser.add_value_provider_argument(

'--complete_batch',

default='EDITED FOR PRIVACY',

help='Bucket where the raw files are',

type=str)

#=========================================================

def run():

#====================================

# TODO PUT AS PARAMETERS

#====================================

JOB_NAME_READING = 'adf-reading'

JOB_NAME_PROCESSING = 'adf-'

job_name = '{}-batch--{}'.format(JOB_NAME_PROCESSING,_millis())

pipeline_options_batch = PipelineOptions()

runtime_options = pipeline_options_batch.view_as(RuntimeOptions)

setup_options = pipeline_options_batch.view_as(SetupOptions)

setup_options.setup_file = './setup.py'

google_cloud_options = pipeline_options_batch.view_as(GoogleCloudOptions)

google_cloud_options.project = PROJECT_ID

google_cloud_options.job_name = job_name

google_cloud_options.region = 'europe-west1'

google_cloud_options.staging_location = GCS_STAGING_LOCATION

google_cloud_options.temp_location = GCS_TMP_LOCATION

#pipeline_options_batch.view_as(StandardOptions).runner = 'DirectRunner'

# # If datflow runner [BEGIN]

pipeline_options_batch.view_as(StandardOptions).runner = 'DataflowRunner'

pipeline_options_batch.view_as(WorkerOptions).autoscaling_algorithm = 'THROUGHPUT_BASED'

#pipeline_options_batch.view_as(WorkerOptions).machine_type = 'n1-standard-96' #'n1-highmem-32' #'

pipeline_options_batch.view_as(WorkerOptions).max_num_workers = 10

# [END]

pipeline_options_batch.view_as(SetupOptions).save_main_session = True

#Needed this in order to pass table to BQ at runtime

pipeline_options_batch.view_as(DebugOptions).experiments = ['use_beam_bq_sink']

with beam.Pipeline(options=pipeline_options_batch) as pipeline_2:

try:

final_data = (

pipeline_2

|'Create empty PCollection' >> beam.Create([None])

|'Get accepted batch file 1/2:{}'.format(OutputValueProviderFn(runtime_options.complete_batch)) >> beam.ParDo(OutputValueProviderFn(runtime_options.complete_batch))

|'Get accepted batch file 2/2:{}'.format(OutputValueProviderFn(runtime_options.complete_batch)) >> beam.ParDo(FileIterator(runtime_options.files_bucket))

|'Read all files' >> beam.io.ReadAllFromText(skip_header_lines=1)

|'Process all files' >> beam.ParDo(ProcessCSV(),COLUMNS_SCHEMA_0)

|'Format all files' >> beam.ParDo(AdfDict())

#|'WriteToBigQuery_{}'.format('test'+str(_millis())) >> beam.io.WriteToBigQuery(

# #dataset= runtime_options.dataset,

# table = str(runtime_options.dataset) + '.' + str(runtime_options.table),

# schema = SCHEMA_ADFImpression,

# project = pipeline_options_batch.view_as(GoogleCloudOptions).project, #options.display_data()['project'],

# create_disposition = beam.io.BigQueryDisposition.CREATE_IF_NEEDED, #'CREATE_IF_NEEDED',#create if does not exist.

# write_disposition = beam.io.BigQueryDisposition.WRITE_APPEND #'WRITE_APPEND' #add to existing rows,partitoning

# )

|'WriteToBigQuery' >> beam.ParDo(BQ_flexible_writer(runtime_options.dataset,runtime_options.table))

)

except Exception as exception:

logging.error(exception)

pass

共有1个答案

请使用以下附加选项运行此选项。

--experiment=use_beam_bq_sink

否则,Dataflow当前将使用不支持ValueProviders的本机版本重写BigQuery接收器。

此外,请注意,不支持将数据集设置为运行时参数。尝试将表参数指定为整个表引用(dataset.table或project:dataset.table)。

-

我试图在Apache Beam中使用BigtableIO的运行时参数来写入BigTable。 我创建了一个从 BigQuery 读取并写入 Bigtable 的管道。当我提供静态参数时,管道工作正常(使用 ConfigBigtableIO 和 ConfigBigtableConfiguration,请参阅此处的示例 - https://github.com/GoogleCloudPlatform/

-

问题内容: 我并不是要特别解决任何问题,而是要努力学习球衣。 我有一个标记为这样的实体类: 以及相应的球衣服务 给出正确的XML响应。假设我想编写一个MessageBodyWriter,它复制相同的行为,并产生一个XML响应,我该怎么做? 通过使用@Provider批注进行标记,我可以看到邮件正文编写器已正确调用。 当调用writeTo时,对象o是一个Vector,类型GenericType是一个

-

suite name=“knowledgetest”verbose=“5”configfailurepolicy=“continue”data-provider-thread-count=“10”parallel=“methods”thread-count=“5”

-

我想为我的客户和API建立契约测试。我的API不能在本地运行,所以我希望能够在部署到生产之前,针对已部署的API临时版本运行提供程序测试。 我在网上看到的提供程序测试的大多数示例都使用了localhost。当尝试对我部署的HTTPSendpoint运行提供程序测试时,测试失败,显示。是不支持HTTPS协议,还是我遗漏了什么? 使用pact-provider-verifier cmd line工具工

-

如果我创建一个提供者并将其绑定到一个类,就像这样 然后

-

我是一个新的pact学习者,我想知道当提供程序验证时我应该输入什么 对于提供程序验证,我应该将提供的目标填充为本地主机,或者代替本地主机,我也可以输入实际环境的主机?哪种场景最适合合同测试?